CAIRIS

CENIIT Project: Contents Associative Indexing and Retrieval of Image Sequences (CAIRIS)

Michael Felsberg

1 Project Background, Industrial Motivation, International Perspective

Many smaller TV broadcasting companies face the problem of managing their digitized broadcast material and of accessing suitable contributions from different media providers and agencies. One major problem is the management of the vast amount of information involved. Often a substantial amount of multimedia information (including audio, video and still images) has to be processed manually before the material can be compiled for a new broadcast project. Automatic content-based processing of this information would be desirable.

The range of media and devices that can be addressed by basically the same technique is much larger than just TV broadcast material and video-cut systems. The application area of content-based retrieval also covers neighboring fields with similar requirements for storage and automated search. Examples are museums, schools, universities, the digitization of historical archives, public video-sharing sites, and eventually also the home user. The latter typically faces the problem of organizing images and videos without spending time on structuring databases. Images or sequences should be retrievable by simple queries in text form or by example. Public video-sharing sites such as YouTube ( http://www.youtube.com/ ) typically face the problem of identifying copyrighted material in order to avoid copyright infringements. Today this is done by acoustic fingerprints, see http://en.wikipedia.org/wiki/YouTube#Use_of_acoustic_fingerprints .

From a scientific perspective, the main bottleneck of these applications is the lack of efficient and robust recognition or association methods. Robust recognition of arbitrary objects in uncontrolled environments is still an unsolved problem, in particular if the implementation has to be usable on a standard PC. For associative access, i.e., presenting a typical instance of an object as the query, methods tend to be much more robust than for recognition. State-of-the-art methods suffer, however, from the computational load of comparing image data. One of the most advanced systems in the literature is described in [ 1 ].

1.1 State of the Art

The main trend of current research in the field of content-based video retrieval is to complement or replace the currently prevailing textual access with descriptors derived from the visual content of the data. A number of national and international programs in this area have been announced recently. As examples, we mention the following:

- Quaero (France and Germany) http://en.wikipedia.org/wiki/Quaero

- Centre for Research-based Innovation: Information Access Disruptions (Norway)

- Trecvid (US) http://www-nlpir.nist.gov/projects/trecvid/

Based on experience in the field [ 3 ], researchers in the area tend to create more adaptive systems [ 4 ] and advanced indexing rules [ 5 , 6 , 7 ], and to apply learning techniques for retrieval [ 8 , 9 ] and recognition [ 10 ].

2 Research Context

Our Computer Vision Laboratory has a strong background in the areas of image descriptors and learning techniques for recognition, see e.g. [ 11 , 12 ]. We participated in an earlier project on content-based search in image and video databases, sponsored by SSF during the years 1997-2003. We received an invitation from Alta Vista to engage in an image database project before their economic downturn.

In our ongoing FP6 project COSPAL, cf. http://www.cospal.org , we have developed a large number of relevant techniques, in particular for information representation for efficient further processing [ 13 , 14 ]. Within the ongoing FP6 project MATRIS, cf. http://www.ist-matris.org , as well as in an IVSS project, cf. http://www.ivss.se/templates/ProjectPage.aspx?id=156 , we acquired competence and experience in real-time processing and the handling of huge data sets.

The CAIRIS project is placed within this context of competences, which provides all the resources needed, from scientific discussions to the availability of software.

3 Project Perspective

During the CAIRIS project, we will try to establish the methods developed within CAIRIS as state of the art in the scientific literature on video retrieval, and the corresponding implementations as a basis for further system development. We will try to attract further projects in the vicinity of CAIRIS in order to establish a whole group of people and projects around the topic of high-volume, high-performance visual information representation, learning, and cognition. In the long run we aim at establishing a whole lab on this topic, which we see as the natural continuation of classical computer vision.

4 Related CENIIT Projects

The most relevant CENIIT project for this project is Computational color processing (Reiner Lenz, 1998-2003). In this project, group theoretical methods were used to replace the RGB color space with models that are more invariant to, e.g., illumination changes or the different devices used to create the image data [ 15 , 16 ]. One application of the framework has been illumination-independent search in still image databases together with Matton AB in Stockholm, cf. http://www.matton.se [ 17 , 18 ] and also [ 19 ].

5 Industrial Contacts

The CAIRIS project has emerged from discussions with industrial partners and other research institutions (see also the workplan below). For CAIRIS itself, the direct contact on the industrial side is the company Focus Enhancements Inc. ( http://www.focusinfo.com/ ), or rather its European headquarters, COMO Computer & Motion GmbH.

6 Workplan

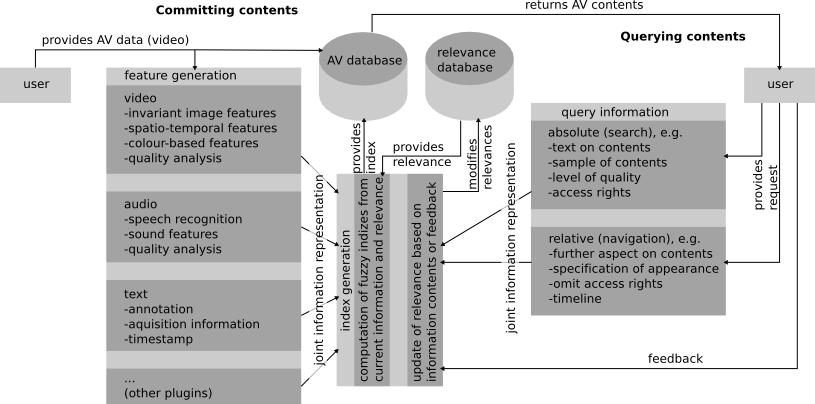

The CAIRIS project is planned as part of a larger attempt to build a whole system for video retrieval, see Fig. 1. As pointed out earlier, the recognition and association of contents is the bottleneck of current systems, and it is scientifically the most interesting part, at least within our field of work. CAIRIS is an attempt to develop a new recognition- and association-based approach to content-based access.

Within the system, the user supplies information that helps to improve the retrieval process: the user formulates queries, chooses or navigates between different alternatives, and assesses the system responses, i.e., we can use the principle of relevance feedback to improve the retrieval process. This is, however, only possible if the retrieval method is sufficiently flexible to adapt to the feedback. For this purpose, we divide the indexing process into two steps: pre-indexing and late associations. In order to allow navigation within the query results, the pre-indexing must be topology-preserving in the sense that similar key-frames (from the user's point of view) are mapped to compact subspaces of the index space.

6.1 Generic Features for Pre-Indexing

When audio-visual data is entered into the database, its content has to be characterized by descriptors. Video streams can be characterized by key-frames. For these key-frames, low-level features are computed by fast signal processing algorithms. These features are then locally clustered, presumably by means of P-channels [ 13 ]. The clusters describe local statistical properties of the low-level features and are later used for representing the key-frames in the recognition or association process. Hence, the P-channel features establish a pre-index of the image sequence.
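The local clustering step can be illustrated with a minimal one-dimensional sketch. It assumes a simplified reading of the P-channel idea from [ 13 ]: each bin stores a sample count together with the summed offsets of the samples from the bin centre, so that a local mean can be recovered later. The function names, the value range and the bin layout are hypothetical choices for illustration and are not taken from the project code.

```python
from math import floor


def p_channel_encode(values, n_bins, lo=0.0, hi=1.0):
    """Encode scalar features in [lo, hi] into a simplified P-channel vector.

    Each bin holds two numbers: how many samples fell into it (histogram
    part) and the sum of their offsets from the bin centre (linear part).
    """
    counts = [0.0] * n_bins
    offsets = [0.0] * n_bins
    scale = n_bins / (hi - lo)
    for v in values:
        x = (v - lo) * scale                      # position in bin units
        j = min(max(int(floor(x)), 0), n_bins - 1)  # clamp to a valid bin
        counts[j] += 1.0
        offsets[j] += x - (j + 0.5)               # offset from centre of bin j
    return counts, offsets


def p_channel_decode_mode(counts, offsets, lo=0.0, hi=1.0):
    """Return the local mean of the most populated bin, mapped back to [lo, hi]."""
    n_bins = len(counts)
    j = max(range(n_bins), key=lambda k: counts[k])
    if counts[j] == 0.0:
        raise ValueError("cannot decode an empty P-channel vector")
    x = j + 0.5 + offsets[j] / counts[j]
    return lo + x * (hi - lo) / n_bins
```

In this sketch, decoding the most populated bin ignores samples that fall into other bins, which is one way to see why such a representation tolerates a limited number of outliers.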

First experiments within the COSPAL and MATRIS projects showed that simple P-channel-encoded features such as color (RGB) and orientation can be used for object recognition and scene registration, respectively, in real time. The results of these experiments show that this type of feature representation is invariant to local variations of the scene, e.g., local occlusions, changes of lighting conditions, mild changes of view angle, and mild changes of scale.

For the CAIRIS project it is planned to analyze the P-channel feature representation further. In particular, we will

- quantify the robustness with respect to the local scene variations mentioned above,

- switch to more suitable color models (see Sect. 4 ),

- analyze the impact of the different parameters of the method,

- improve the implementation to meet computational requirements; the current implementation is a mixture of Matlab and C code.

This work is planned for the first project year. Since the quantification of the robustness and the parameter analysis are strongly coupled, we expect work on these two points to continue into the second project year, in particular concerning publications.

Further work, presumably in the second year, will be the integration of spatio-temporal features into the pre-indexing. These will include motion vectors and discontinuity detection (cut detection). The required new features can easily be incorporated into the P-channel representation. Note also that the results from this part of the project can be used in other areas, for instance object recognition in vision-based robotics.
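As an illustration of discontinuity detection, the following minimal sketch flags a cut whenever the intensity histograms of two consecutive frames differ strongly. Histogram differencing is a common baseline and is used here only as an assumption; the text does not specify which cut detector the project will adopt. The frame format (a flat list of 8-bit grey values), the function names and the threshold are hypothetical.

```python
def gray_histogram(frame, n_bins=8, max_value=256):
    """Normalised intensity histogram of a non-empty frame (flat list of ints)."""
    hist = [0.0] * n_bins
    for p in frame:
        hist[p * n_bins // max_value] += 1.0
    total = float(len(frame))
    return [h / total for h in hist]


def detect_cuts(frames, threshold=0.5, n_bins=8):
    """Return indices i where a cut is assumed between frame i-1 and frame i."""
    cuts = []
    prev = None
    for i, frame in enumerate(frames):
        hist = gray_histogram(frame, n_bins)
        if prev is not None:
            # L1 distance between normalised histograms lies in [0, 2].
            dist = sum(abs(a - b) for a, b in zip(hist, prev))
            if dist > threshold:
                cuts.append(i)
        prev = hist
    return cuts
```

A per-frame histogram of this kind is itself a coarse channel-like description, so the same quantity could in principle be derived from the pre-index instead of from the raw frames.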

6.2 Late Associations for Retrieval and Recognition

In order to take into account the statistics of the retrieval or query process, dynamic indexing is required. As pointed out above, it is not feasible to recreate the index for the whole database, but the pre-indexing makes it possible to change the indexing virtually. This is done by dynamically changing the association between the pre-index and the queries, i.e., by learning a mapping between the pre-index and the query space. Since the final indexing is hence done at query time in terms of a content-based query, we call this technique late association.
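The idea of late association can be sketched as a small linear map that is adjusted from user feedback while the pre-index vectors themselves stay untouched. The sketch below uses a plain projected gradient step with a non-negativity constraint. This is an assumption made for illustration and not the learning rule of the project; the names `associate` and `feedback_update`, the learning rate and the toy vectors are hypothetical.

```python
def associate(C, a):
    """Map a pre-index vector a to the query space with association matrix C."""
    return [sum(c * x for c, x in zip(row, a)) for row in C]


def feedback_update(C, a, target, rate=0.5):
    """One incremental gradient step on ||target - C a||^2, kept non-negative.

    Only the association matrix C changes; the pre-index vector a is fixed.
    The step is stable as long as rate * ||a||^2 < 2.
    """
    response = associate(C, a)
    for i, row in enumerate(C):
        err = target[i] - response[i]
        for j in range(len(row)):
            row[j] = max(0.0, row[j] + rate * err * a[j])
    return C


# Two key-frames with fixed pre-index vectors and the responses a user wants.
a1, t1 = [1.0, 0.0, 0.0], [1.0, 0.0]
a2, t2 = [0.0, 1.0, 0.0], [0.0, 1.0]
C = [[0.0] * 3 for _ in range(2)]
for _ in range(20):
    feedback_update(C, a1, t1)
    feedback_update(C, a2, t2)
```

Each update touches the map once per feedback sample, so the cost of adapting to the user does not depend on the size of the database in this toy setting.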

Although the pre-indexing leads to a reduction of data by three orders of magnitude (for each key-frame), it is still infeasible to recompute the whole association function. This is a point where another technique developed in the context of the COSPAL project could be beneficial: incremental learning [ 20 ]. In this context, a number of scientific challenges have to be faced:

- How to change the learning rule from [ 12 ] to make it suitable for P-channels?

- In particular: which sparsity constraint leads to the best method?

- How to reformulate the learning problem to incorporate reinforcement and relevance feedback?

- How to generalize incremental learning to the new learning problem resulting from the previous three points?

- How to process and store huge association networks in a hierarchical or tree-based way?

These challenges are addressed in the light of the application we have in mind in the context of CAIRIS, but they are relevant in many other areas where huge amounts of data have to be processed in order to get associative access to the data. Other possible applications are in the areas of surveillance and autonomous systems.

7 Results

The project started on July 1, 2007 with two main tracks of research, both aiming at developing methods for fast generation of features for the pre-indexing, cf. Sect. 6.1:

- bundle adjustment for segmenting rigid bodies

- fast recognition of scenes

Since the project start, we have begun porting several required image processing routines to the GPU. During the three months of work, we have implemented the following methods:

- resolution pyramids (Gaussian pyramids)

- KLT tracking (least-squares patch matching)

- POI detectors (corner detector)

- Edge detection and grouping algorithm

The implementations are still under evaluation and publishable results are to be expected soon. In particular the novel edgel-grouping method also introduces new methodological aspects.
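For orientation, the first method in the list above, a Gaussian resolution pyramid, can be sketched on the CPU as follows. This is a plain reference sketch and not the GPU implementation from the project. It assumes the common 5-tap binomial kernel as the Gaussian approximation, border replication, and subsampling by a factor of two; all names are hypothetical.

```python
# 5-tap binomial approximation of a Gaussian; the weights sum to 1.
KERNEL = (1 / 16, 4 / 16, 6 / 16, 4 / 16, 1 / 16)


def _blur_1d(signal):
    """Smooth a 1D sequence with KERNEL, replicating the border samples."""
    n = len(signal)
    out = []
    for i in range(n):
        acc = 0.0
        for k, w in enumerate(KERNEL):
            idx = min(max(i + k - 2, 0), n - 1)
            acc += w * signal[idx]
        out.append(acc)
    return out


def blur(image):
    """Separable smoothing: rows first, then columns."""
    rows = [_blur_1d(row) for row in image]
    cols = [_blur_1d(list(col)) for col in zip(*rows)]
    return [list(row) for row in zip(*cols)]


def gaussian_pyramid(image, levels):
    """Return a list of images; each level is smoothed and halved in size."""
    pyramid = [[[float(v) for v in row] for row in image]]
    for _ in range(levels - 1):
        smoothed = blur(pyramid[-1])
        pyramid.append([row[::2] for row in smoothed[::2]])
    return pyramid
```

Because the filter is separable and every output pixel is computed independently, the same structure maps naturally onto per-pixel GPU kernels.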

For the fast recognition of scenes, the P-channel method has been improved and implemented in the OpenCV framework ( http://sourceforge.net/projects/opencv/ ). The method for recognition of scenes is based solely on the visual appearance of the scene and runs in video real-time. Currently, the method is frame-based, i.e., each single frame is compared to the stored views in the database. Still, recognition rates are about 96%, which is sufficient for video-retrieval applications if we require temporally consistent recognition over a few frames. The method has been published in the Journal of Real-Time Image Processing, DOI 10.1007/s11554-007-0044-y .
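The effect of requiring temporal consistency can be estimated with a short calculation. The sketch below assumes that frame-wise decisions are statistically independent and that each frame is simply right or wrong; both are simplifications, since errors in neighbouring video frames are usually correlated, so the result is an optimistic bound rather than a measured rate. The function names are hypothetical.

```python
from collections import Counter
from math import comb


def majority_correct_probability(p, n):
    """P(more than half of n independent frames are correct), per-frame rate p."""
    need = n // 2 + 1
    return sum(comb(n, k) * p**k * (1 - p) ** (n - k) for k in range(need, n + 1))


def vote(labels):
    """Return the most frequent label in a short window of frame-wise results."""
    return Counter(labels).most_common(1)[0][0]
```

Under these assumptions, a per-frame rate of 0.96 gives a majority-vote rate above 0.999 for a window of five frames, e.g. via `majority_correct_probability(0.96, 5)`.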

We are currently extending the method to take the temporal domain into account as well, in order to model ego-motion effects and continuous variations of the scene. Furthermore, the database organization has to be changed to a tree structure in order to achieve sub-linear search times. The sampling scheme within the P-channel representation has to be modified to be more adaptive to the contents. A 1D version of an adaptive resampling algorithm has been developed and is under evaluation. Publishable results are expected soon.

Publications

- [FH1] M. Felsberg and J. Hedborg. Real-Time View-Based Pose Recognition and Interpolation for Tracking Initialization. Journal of Real-Time Image Processing, 2(2-3):103-116, 2007.

- [FG1] M. Felsberg and G. Granlund. Fusing Dynamic Percepts and Symbols in Cognitive Systems. International Conference on Cognitive Systems, 2008.

- [F1] M. Felsberg. On the Relation Between Anisotropic Diffusion and Iterated Adaptive Filtering. Pattern Recognition - DAGM 2008, Springer LNCS 5096, 436-445, 2008.

- [FKK1] M. Felsberg, S. Kalkan, and N. Krüger. Continuous Dimensionality Characterization of Image Structures. Image and Vision Computing, accepted for publication in a future issue.

- [F2] M. Felsberg. On Second Order Operators and Quadratic Operators. International Conference on Pattern Recognition, 2008.

References

- [1] Stepan Obdrzalek and Jiri Matas. Sub-linear indexing for large scale object recognition. In W. F. Clocksin, A. W. Fitzgibbon, and P. H. S. Torr, editors, BMVC 2005: Proceedings of the 16th British Machine Vision Conference, volume 1, pages 1--10, London, UK, September 2005. BMVA.

- [2] X. S. Zhou and T. S. Huang. Relevance feedback in image retrieval: A comprehensive review. Multimedia Systems, 8:536--544, 2003.

- [3] Alexander G. Hauptmann. Lessons for the future from a decade of Informedia video analysis research. In CIVR 2005, volume 3568 of LNCS, pages 1--10. Springer Berlin, 2005.

- [4] G. J. F. Jones. Adaptive systems for multimedia information retrieval. In AMR 2003, volume 3094 of LNCS, pages 1--18, 2004.

- [5] M. Detyniecki. Adaptive discovery of indexing rules for video. In AMR 2003, volume 3094 of LNCS, pages 176--184, 2004.

- [6] P. Tzouveli, G. Andreou, G. Tsechpenakis, Y. Avrithis, and S. Kollias. Intelligent visual descriptor extraction from video sequences. In AMR 2003, volume 3094 of LNCS, pages 132--146, 2004.

- [7] Cees G.M. Snoek, Marcel Worring, Jan-Mark Geusebroek, Dennis C. Koelma, Frank J. Seinstra, and Arnold W.M. Smeulders. The semantic pathfinder: Using an authoring metaphor for generic multimedia indexing. IEEE Trans. Pattern Analysis and Machine Intelligence, 28(10):1678--1689, 2006.

- [8] A. D. Lattner, A. Miene, and O. Herzog. A combination of machine learning and image processing technologies for the classification of image regions. In AMR 2003, volume 3094 of LNCS, pages 185--199, 2004.

- [9] K.-M. Lee. Neural network-generated image retrieval and refinement. In AMR 2003, volume 3094 of LNCS, pages 200--211, 2004.

- [10] R. Fergus, L. Fei-Fei, P. Perona, and A. Zisserman. Learning object categories from Google's image search. In Proc. of 10th Intl. Conf. on Computer Vision, 2005.

- [11] G. H. Granlund and A. Moe. Unrestricted recognition of 3-d objects for robotics using multi-level triplet invariants. Artificial Intelligence Magazine, To appear 2004.

- [12] Björn Johansson, Tommy Elfving, Vladimir Kozlov, Yair Censor, Per-Erik Forssen, and Gösta Granlund. The application of an oblique-projected Landweber method to a model of supervised learning. Mathematical and Computer Modelling, 43:892--909, 2006.

- [13] Michael Felsberg and Gösta Granlund. P-channels: Robust multivariate m-estimation of large datasets. In International Conference on Pattern Recognition, Hong Kong, August 2006.

- [14] M. Felsberg, P.-E. Forssen, and H. Scharr. Channel smoothing: Efficient robust smoothing of low-level signal features. IEEE Transactions on Pattern Analysis and Machine Intelligence, 28(2):209--222, 2006.

- [15] R. Lenz. Two stage principal component analysis of color. IEEE Transactions Image Processing, 11(6):630--635, 2002.

- [16] R. Lenz, T. H. Bui, and J. Hernandez-Andres. Group theoretical structure of spectral spaces. Journal of Mathematical Imaging and Vision, 23:297--313, 2005.

- [17] L. Viet Tran. Efficient Image Retrieval with Statistical Color Descriptors. PhD thesis, Linköping University, Department of Science and Technology, 2003.

- [18] T.H. Bui. Group-Theoretical Structure in Multispectral Color and Image Databases. PhD thesis, Linköping University, 2005.

- [19] L. V. Tran and R. Lenz. Compact colour descriptors for colour-based image retrieval. Signal Processing, 85(2):233--246, 2005.

- [20] Erik Jonsson, Michael Felsberg, and Gösta Granlund. Incremental associative learning. Technical Report LiTH-ISY-R-2691, Dept. EE, Linköping University, Sept 2005.

Last updated: 2014-03-21
