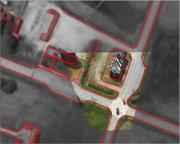

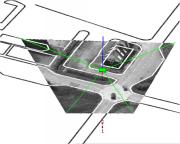

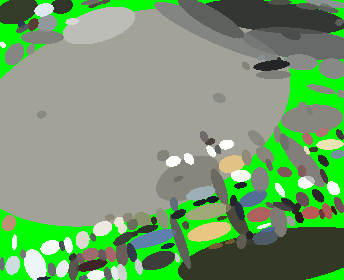

Wallenberg Laboratory on Information Technology and Autonomous Systems

The Wallenberg Laboratory on Information Technology and Autonomous Systems (WITAS) consists of three research groups at the Department of Computer and Information Science, and the Computer Vision Laboratory. WITAS has been engaged in goal-directed basic research in the area of intelligent autonomous vehicles and other autonomous systems since 1997. The major goal is to demonstrate, before the end of the year 2003, an airborne computer system that is able to make rational decisions about the continued operation of the aircraft, based on various sources of knowledge, including pre-stored geographical knowledge, knowledge obtained from vision sensors, and knowledge communicated to it by radio.

CVL project in WITAS

The project manager for the Computer Vision Laboratory's part of WITAS is Prof. Gösta Granlund.

For the WITAS project, the ambition has been to develop a powerful and flexible generic vision structure that would be suitable for robotics purposes in general. This effort builds upon and extends the Research on Cognitive Vision, which has been ongoing at CVL for several years. The demands of robotics systems have motivated the development of a new Recognition Structure, which is intended to be used consistently for recognition of objects, relations between objects, scenes, navigation, etc. This structure is used for navigation in the WITAS vehicle.

The Recognition Structure uses a new architecture for learning systems, which was developed outside the WITAS project but has been tested for different applications within the project [gfj03]. This architecture uses a monopolar channel information representation [gg2000g]. An itemized description of the implemented vision procedures has been given separately. In addition, the vision structure makes use of available procedures, both from our own lab and from other labs.

Another ambition has been to develop a system structure that allows easy and tight integration between vision procedures as well as other functions. The hardware/software module dedicated to the execution of image processing operations is collectively known as the Image Processing Module (IPM). Due to the constraints associated with scenarios of the type described, an important operational requirement of the IPM is that it be highly flexible and reconfigurable. The idea is that the UAV should be able to switch between different modes of operation, where each mode may require a different configuration of the IPM. The operations implemented in IPM and IPAPI are discussed separately.

Publications

WITAS documents from CVL


Last updated: 2014-03-21
